The task is not the job

A supply-side answer to Amodei and Imas

A few days ago, Dario Amodei, CEO of Anthropic, went on Fox News and said that AI will eliminate up to half of all entry-level white-collar jobs within one to five years. He named finance, consulting, law, and tech. He has told versions of this story for a year, including in his recent essay “The Adolescence of Technology.” Mustafa Suleyman, CEO of Microsoft AI, went further when he said to the FT:

So white collar work, where you’re sitting down at a computer, either being a lawyer or an accountant or a project manager or a marketing person, most of those tasks will be fully automated by an AI within the next 12 to 18 months.

Assume for a moment they are right about the technology. Models keep improving, and tasks that used to need a junior analyst or programmer can now be done by a prompt. Does it follow that the jobs disappear?

There are two answers on offer. Alex Imas emphasises the demand side in an excellent new essay, which I recommend. Here I want to offer the supply-side answer, which my co-authors Jin Li and Yanhui Wu and I develop in our forthcoming book, Messy Jobs.

Imas asks: if AI makes a wide range of cognitive work cheap, where does spending go next? His answer: spending flows toward goods and services where the human origin is part of what customers buy.

When a sector becomes more productive, spending shifts away from it toward sectors with higher income elasticity. For instance, agriculture employed forty percent of the American workforce in 1900 and under two percent today. As societies get richer, they spend relatively less on food and more on other goods and services.

Human origin itself is, in some markets, part of the value. René Girard argued that human desire is mimetic: we want what others want, and we want it more when they cannot have it. Imas and Kristóf Madarász show experimentally that the winner pays a premium for possessing what others cannot. Imas and Graelin Mandel found that when the scarce good is produced by a machine, the exclusivity premium falls by half.

So as AI cheapens commodity production, real incomes rise and spending shifts toward what Imas calls the relational sector: teaching, care, hospitality, craft and live performance, where the human element is part of what is being bought. Imas concludes that the durable jobs of the future will be nurses, therapists, teachers, boutique fitness instructors, personal chefs, bespoke tailors, craft brewers, live performers, spiritual guides, childcare workers, and hospitality workers.

Many will find Imas’ list a bit frightening. If the relational sector is where human work survives, what happens to the hundreds of millions of people who are neither artisans nor caregivers? What will office workers, consultants, engineers, and middle managers do?

I think the relational shift is part of the story, but not the full answer. Many jobs survive because firms do not buy isolated tasks; they buy bundles. They also survive because organizations do more than process information: they allocate authority and settle conflicts. AI can help with all of this. It does not follow that it can replace the people who do it.

Bundles

Most of the discussion of AI and labour markets starts from task exposure: if AI can perform more of the tasks in an occupation, that occupation should lose employment or earnings. But labour markets price jobs, not tasks.

A job is a bundle of tasks. The real question is not whether AI can perform one component of the bundle. It is whether that component can be separated from the rest at low cost, as we discuss in a recent working paper. When that separation is cheap, the bundle is weak: AI takes a piece, the human role narrows, and labor loses share. When separation is expensive, the bundle is strong: AI helps with part of the work, but the human still sells the full service and keeps the larger share of the revenue.

Many thought travel agents would be eliminated by online booking. As Ernie Tedeschi of Stripe Economics showed this month, travel agent employment is now more than 60% below its dot-com peak. For most of what agents used to do (searching flights, comparing hotel rates, issuing tickets), the bundle was weak. Separating the booking task from the human was cheap, and once it was cheap, the task was gone. But something else happened to the agents who stayed. They moved upmarket, charged planning fees, and joined luxury consortia that offer upgrades and personalized itineraries. In 2000, average weekly earnings at travel agencies were 87% of the private-sector average. By 2025, they had reached 99%. The surviving agents earn more relative to other workers than they used to, precisely because the machine took the weak part and left them the strong one.

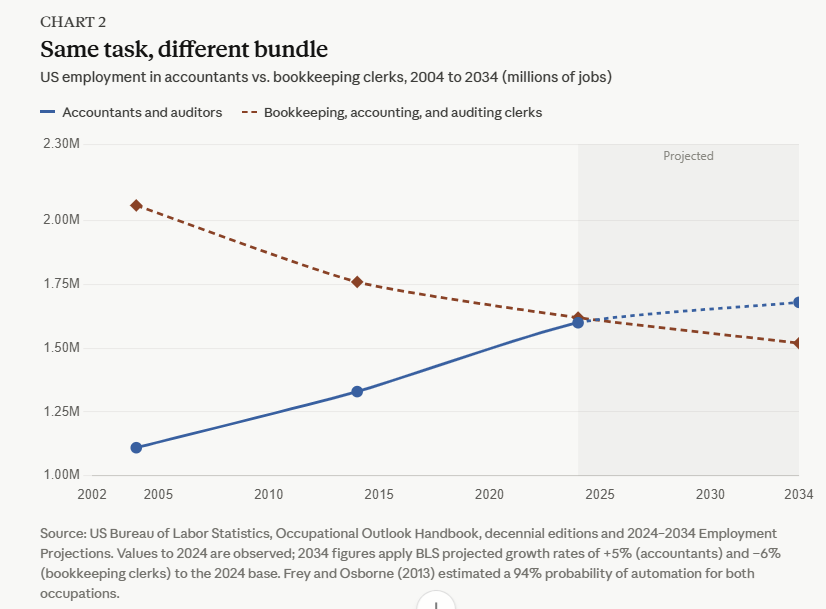

Most of the individual tasks that make up accounting involve the diligent completion of spreadsheets. These tasks individually seem easy to replace. In 2013, a study by Carl Frey and Michael Osborne put the probability that accountants and auditors would be automated at 94 percent. A decade later, the US Bureau of Labor Statistics counts 1.6 million employed accountants and auditors, reports median pay of $81,680, and projects the occupation to grow another 5 percent through 2034, faster than the average for all jobs. By contrast, employment in the BLS category “bookkeeping, accounting, and auditing clerks” is projected to fall 6 percent over the same decade. The clerical task, writing down transactions and reconciling ledgers, is a weak bundle. The accountant’s job is a strong bundle. She interprets tax law as it applies to a specific client, signs the audit that the bank and the SEC will rely on, and carries the legal exposure.

Three traits make a bundle stronger.

The first is unpredictable demand. If you could tell in advance which tasks a customer will need, you could assign each to the right worker, human or machine. In practice, you often cannot. How often have you placed a seemingly routine customer service call about a bug only for it to turn up a much thornier problem with your account? AI handles the routine well and the delicate poorly, and constant handoffs are expensive. My coauthor Yanhui Wu spent time in Hangzhou with a leading Chinese customer service firm that has deployed a domain-specific model for two years. Human agents are still indispensable on the two dimensions that matter most: recognising what the customer is not explicitly saying and avoiding the kind of interaction that ends up on social media. As one of their senior managers put it, unbundling those tasks from the routine ones would require “frequent switches between the AI and human modes. The coordination cost would be too high.”

The second is production spillovers. Some tasks belong together because doing one makes you better at the other. A radiologist who has already spoken with the patient and reviewed the clinical record reads the scan better than one who sees only the image.

The third is the measurement problem. Armen Alchian and Harold Demsetz pointed out that when several inputs jointly produce an output, their separate contributions are hard to disentangle. If AI drafts and a human signs, and the final product goes wrong, who is legally responsible? Banks, regulators, boards, and clients need someone to blame.

Where these conditions hold, AI may raise productivity inside the bundle without replacing it. A nurse practitioner with AI diagnostic support may handle cases that used to require a doctor, and an entrepreneur with AI tools may run a company that used to require a team, without either being displaced.

Authority

Bundling understates the case. Organisations are not production functions. They are coalitions of people with legitimate but conflicting goals.

Managers exist to solve conflicts within the firm and to allocate scarce resources between competing ends: marketing would like to buy advertisements; engineers would like more tokens; lawyers would like to add processes; and the financial side would prefer to do more of everything with less money. They cannot all be satisfied at once. Someone has to decide who loses. The question is whether AI can play that role.

AI optimists say AI will do this too. Billions of AI agents will negotiate, draft contracts, and transact at machine speed. Hayek’s insight was that the price system aggregates enormous amounts of dispersed knowledge without anyone needing to understand the whole. If AI makes that system faster and smarter, why do we need managers at all?

One answer comes from Kenneth Arrow, who argued in The Limits of Organization that information is not a commodity like coffee: you cannot inspect it before you buy it, because once you have inspected it, you already have it. The people who hold information have reason to distort it, and what checks that distortion is not a better contract but trust, which cannot be traded:

Trust is an important lubricant of a social system: It is extremely efficient; it saves a lot of trouble to have a fair degree of reliance on other people’s word. Unfortunately this is not a commodity which can be bought very easily. If you have to buy it, you already have some doubts about what you’ve bought.

Trust in this sense is not a belief about competence. It is the expectation that the other party will bear a reputational cost if it defects, and will be around tomorrow to pay it.

Oliver Williamson added a second half. Once one party has made a relationship-specific investment, the other can hold it up. Before xAI built Colossus in Memphis, it could walk away to any site. Once it had sunk billions into the facility, the city could demand concessions, and did. A human manager carries a reputation with counterparties who will deal with her again. The firm can fire her if she fails. An AI agent has neither a reputation to lose nor a job to lose. It cannot be sued, and it cannot be replaced with fanfare to signal a reset.

There is also the problem of the unforeseen. Every project involves thousands of situations nobody specified in advance. A construction contract says the project must be delivered in March, but it does not say what happens when the electrician and the plumber both need access to the same wall on the same day. The manager resolves this by exercising what Oliver Hart and John Moore called residual decision rights: the authority to decide matters that contracts and processes have not specified in advance.

Exercising these residual decision rights is not a cognitive task but an institutional one. The decider must meet three conditions. First, they must hold tacit and relational knowledge that the people around them will not share, because communicating it would give away their bargaining power. Second, they must bear consequences, meaning they can be sued, fired, or publicly blamed when things go wrong. When a Sonos product launch fails, the CEO is fired, and the organisation moves on. Third, they must confer legitimacy on the decision. This is why organisations hold so many meetings. Meetings are not information exchanges. They are rituals of procedural fairness. People accept decisions they dislike when the process looks legitimate and the decider is accountable for the result.

Could AI acquire this standing? In principle, yes. But the machinery that lets human managers do this work, such as corporate registries, professional licensing, and courts that can compel testimony, took centuries to build. No equivalents exist for AI. Until they do, some human will have to hold the residual decision rights.

Why Amodei is wrong

AI will not produce mass unemployment in rich economies that can fund the transition. Spending will shift toward work where humans add value. Labour share may fall in aggregate even as human-intensive sectors grow as a share of the economy.

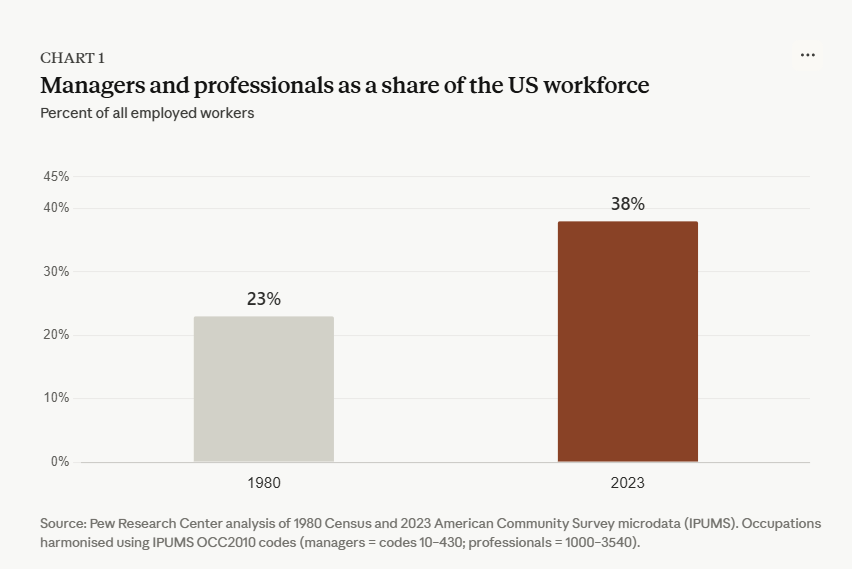

Imas thinks most surviving employment will be in the relational sector where customers demand human origin. I think much of the surviving employment will sit in strong-bundle, AI-augmented work and in the political-organizational core of firms. The future includes more therapists, tailors, personal trainers, and craft brewers, but also more managers whose value lies in handling ambiguity, integrating context, reconciling conflicting interests, and bearing the consequences of decisions.

The disruption of junior career ladders is real, and we have written about it. But the argument that “half of entry-level white-collar jobs will be gone in five years” confuses task automation with the extinction of jobs. The real world is messy. The mess is what happens when human beings with competing interests try to get things done together. The economy Imas describes is the economy of what customers want. The economy I am describing is the economy of what organizations need to do. The second is larger.

These ideas draw on ongoing work with Jin Li and Yanhui Wu for our forthcoming book, Messy Jobs.

"The task is not the job" should be tattooed on the arms of every consultant currently selling AI transformation.

The travel agent data is striking — the surviving agents earn more 'because the machine took the weak part of the bundle and left them the strong one.' I used a parallel example from a different industry. The Swiss watch industry was nearly destroyed by quartz in the 1980s. Their comeback wasn't built on better timekeeping — it was built on redefining what a watch is for. Repositioning, not resistance. Your bundle framework explains why that worked: when the commodity task is gone, what remains is the part that was doing the real work.

Your Arrow quote on trust — "if you have to buy it, you already have some doubts about what you've bought" — is the best sentence I've read on why the authority question can't be automated away.

I published a piece recently on where new work appears as AI stalls at the edges — and a follow-up is coming shortly that asks whether those cracks add up to something livable or merely a service layer around machine capability. Your bundle framework sharpens that question considerably. I think we're circling the same territory from different altitudes.

The first piece is here if you're curious: https://rajeshachanta.substack.com/p/the-last-meter-economy. The second is in the works.

It will be a while before there's an AI chef who can taste the soup and say, "This needs more cumin." The same goes for a vintner. AI can see into the UV and IR, but no one is interested in taste and smell. AI people aren't interested.